Reliability becomes the new frontier of AI.

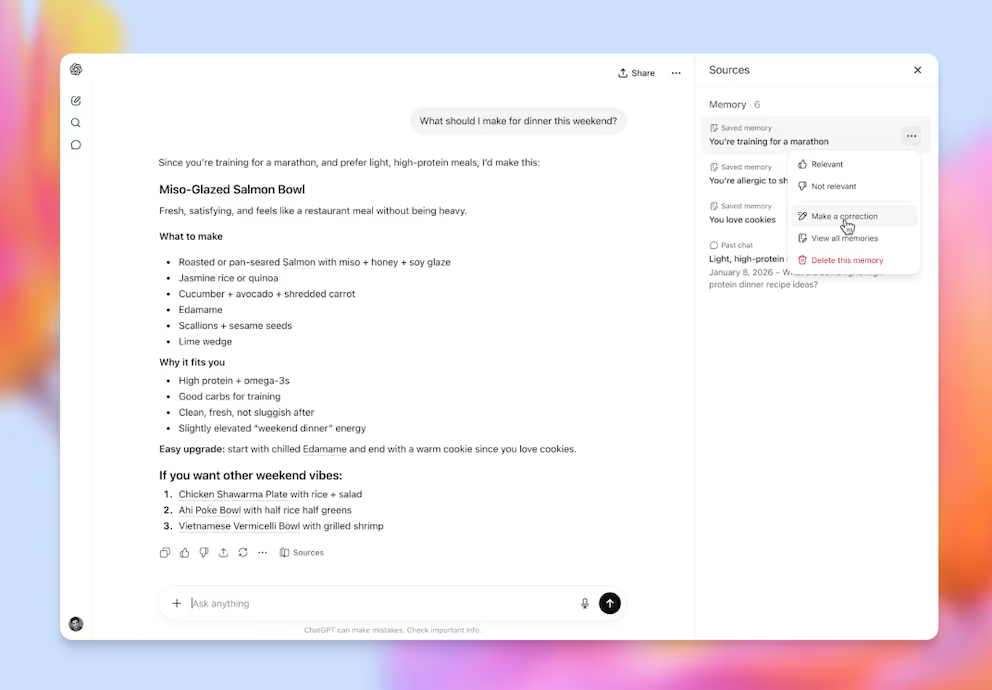

San Francisco, May 2026. OpenAI is pushing a more reliable generation of artificial intelligence for sensitive areas such as health, law and finance, where mistakes are not merely technical defects but potential sources of harm, liability and institutional distrust. The promise is not only better answers but clearer boundaries, so that users who need guidance do not mistake probabilistic language for professional authority.

The move reflects a deeper tension in the AI industry. Models are becoming more capable, but capability alone does not solve the problem of judgment. In medical, legal and financial contexts, an answer can sound confident, persuasive and organized while still being incomplete, outdated or dangerously misapplied.

OpenAI’s challenge is therefore reputational as much as technological. If artificial intelligence is to enter regulated knowledge systems, it must show stronger factual discipline, clearer communication of uncertainty, safer escalation and better refusal behavior when a question requires a licensed professional. Trust will be built not through speed but through restraint.

The stakes are structural. A chatbot that helps draft an email is one thing; a model that weighs in on a cancer question, a divorce dispute or an investment decision occupies a different category of power. In those cases, the interface becomes a gatekeeper between public anxiety and institutional expertise.

The next phase of AI will be judged less by spectacle and more by accountability. OpenAI is signaling that the market no longer wants only smarter systems; it wants systems that know when not to overreach. In the most sensitive fields, the future of artificial intelligence may depend on whether it can earn the right to be trusted without pretending to replace human responsibility.

Behind every data point, the intention.