Authorities in multiple regions are tightening regulatory oversight of foreign artificial intelligence systems in response to perceived risks to data protection, national security and technological sovereignty.

Brussels, Belgium.

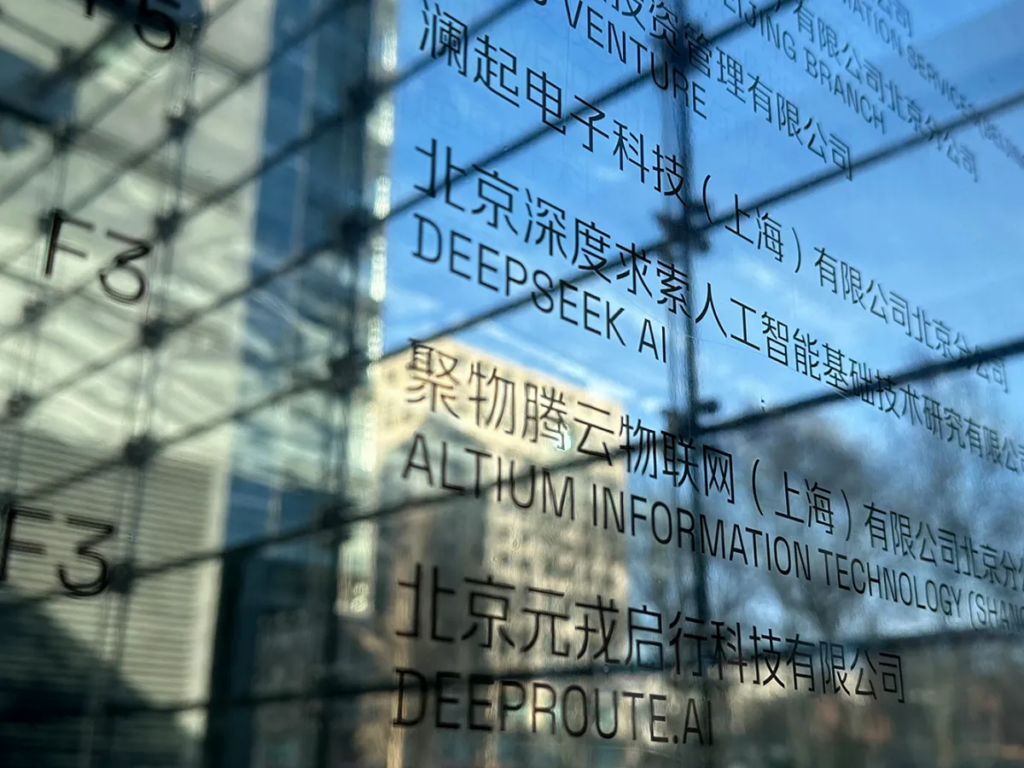

Regulatory authorities in several European and Asian countries have broadened prohibitions and restrictions on the use of DeepSeek, a Chinese-developed artificial intelligence platform, as part of a growing global effort to address perceived security vulnerabilities associated with foreign AI technologies. The expanded measures reflect heightened scrutiny of data governance, potential access to sensitive information and dependencies on externally controlled systems that could, according to officials, affect national interests and individual privacy. These developments underscore the complex interplay between technological innovation, regulatory policy and geopolitical considerations in the governance of advanced digital tools.

European regulators have cited concerns about cross-border data flows and the robustness of privacy protections when AI platforms manage or process personal information. Given DeepSeek’s architecture and its integration with data sources, authorities have questioned whether existing safeguards are sufficient to prevent unauthorized access or misuse of information. These assessments build on broader European priorities related to data protection, digital autonomy and the strategic resilience of technological ecosystems, prompting decisions to limit procurement of such systems by public institutions and critical infrastructure entities.

In parallel, several Asian governments have reviewed the deployment of DeepSeek within their jurisdictions, evaluating potential implications for national security and economic competitiveness. Some administrations have imposed constraints on the use of foreign AI in sectors deemed sensitive, including defense-related research, public services and strategic industries. Officials participating in these reviews have emphasized the importance of establishing clear regulatory frameworks that balance innovation with accountability, particularly as artificial intelligence becomes more deeply embedded in decision-making across both public and private sectors.

Industry analysts note that concerns about AI security are not limited to any single region or technology provider. Instead, they reflect a broader reevaluation of how governments manage risks associated with powerful digital platforms whose operational logic and data interactions can extend beyond national borders. In this context, authorities aim to mitigate potential vulnerabilities by enforcing compliance with local standards, fostering transparency in algorithmic design and requiring robust controls over data residency and access.

The expanded restrictions on DeepSeek are also influenced by geopolitical dynamics, in which technology governance becomes a component of broader strategic competition. In some cases, tensions between states shape perceptions of foreign-developed technologies, leading to more cautious approaches to adoption and collaboration. Policymakers argue that technological sovereignty, meaning the ability of a state to control the tools, standards and infrastructures that underpin its digital economy, is central to long-term economic security and resilience, and that it justifies tighter scrutiny of externally developed AI systems.

At the same time, technology companies and industry groups have called for dialogue between regulators and developers to clarify compliance expectations and to explore pathways for addressing security and privacy concerns without compromising innovation. These discussions highlight the difficulty of crafting policies that protect national priorities while preserving access to cutting-edge tools that can drive economic growth, improve public services and advance scientific research.

For organizations that have already deployed DeepSeek, the expanded restrictions pose operational and strategic challenges. Some entities are now evaluating alternative providers, adjusting procurement strategies or accelerating investments in domestic AI capabilities. The shift underscores how regulatory actions can influence market dynamics and encourage diversification of technology supply chains.

Critics of the expanded measures argue that overly restrictive policies could hinder access to beneficial AI innovations or fragment global technology standards, potentially creating barriers to collaboration and slowing the pace of development. They emphasize that risk management should be proportional and based on evidence, while encouraging interoperability and shared governance practices across jurisdictions.

As regulatory landscapes evolve, governments face the ongoing task of balancing security, economic interests and technological openness. How authorities refine their frameworks in response to emerging risks will shape the deployment of AI systems and influence the broader trajectory of digital transformation.

Behind every data point, there is an intention. Behind every silence, a structure.